January 19, 2016 | Volume 12 Issue 03

Designfax weekly eMagazine

Algorithm helps turn smartphones into 3D scanners

While 3D printers have become relatively cheap and available, 3D scanners have lagged well behind. Now an algorithm developed by Brown University researchers may help bring high-quality 3D scanning capability to off-the-shelf digital cameras and smartphones.

"One of the things my lab has been focusing on is getting 3D image capture from relatively low-cost components," said Gabriel Taubin, associate professor of engineering and computer science. "The 3D scanners on the market today are either very expensive or are unable to do high-resolution image capture, so they can't be used for applications where details are important."

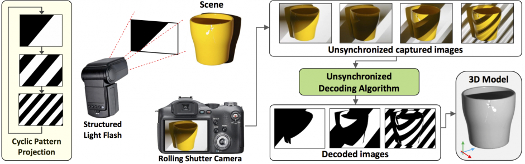

Most high-quality 3D scanners capture images using a technique known as structured light. A projector casts a series of light patterns on an object, while a camera captures images of the object. The ways in which those patterns deform over and around an object can be used to render a 3D image. But for the technique to work, the pattern projector and the camera have to be precisely synchronized, which requires specialized and expensive hardware.
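To make the idea of a pattern series concrete, here is a minimal sketch of one common structured-light scheme: binary stripe patterns based on Gray codes. The article does not say which patterns the Brown team projects, so the Gray-code choice and the function names `gray_code_patterns` and `decode_column` are assumptions made for illustration only. Each projector column gets a unique bit sequence across the patterns, so a camera pixel that observes that sequence can be matched to the column that lit it.

```python
def gray_code_patterns(width, n_bits):
    """Build n_bits stripe patterns for a projector that is `width` columns wide.

    Pattern k holds bit k (most significant first) of each column's Gray code.
    Hypothetical helper for illustration; not taken from the researchers' paper.
    """
    patterns = []
    for k in range(n_bits):
        shift = n_bits - 1 - k
        # c ^ (c >> 1) is the standard binary-to-Gray-code conversion.
        patterns.append([((c ^ (c >> 1)) >> shift) & 1 for c in range(width)])
    return patterns


def decode_column(bits):
    """Turn the Gray-code bits seen at one camera pixel back into a column index."""
    value = 0
    running = 0
    for b in bits:
        running ^= b  # each binary bit is the XOR of all Gray bits so far
        value = (value << 1) | running
    return value


patterns = gray_code_patterns(width=8, n_bits=3)
# Bits observed over time by a pixel lit by projector column 5:
observed = [p[5] for p in patterns]
print(decode_column(observed))
```

Once every camera pixel has a decoded projector column, depth follows from triangulation between the camera ray and the projector column, which is the part a standard reconstruction pipeline handles.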

The algorithm Taubin and his students have developed, however, enables the structured-light technique to be done without synchronization between projector and camera, which means an off-the-shelf camera can be used with an untethered structured-light flash. The camera just needs to have the ability to capture uncompressed images in burst mode (several successive frames per second), which many DSLR cameras and smartphones can do.

The researchers presented a paper describing the algorithm last month at the SIGGRAPH Asia computer graphics conference.

The problem in trying to capture 3D images without synchronization is that the projector could switch from one pattern to the next while an image is still being exposed. As a result, the captured images are mixtures of two or more patterns. A second problem is that most modern digital cameras use a rolling shutter mechanism. Rather than capturing the whole image in one snapshot, these cameras scan the field either vertically or horizontally, sending the image to the camera's memory one line of pixels at a time. As a result, different parts of the image are captured at slightly different times, which also can lead to mixed patterns.
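The two effects can be illustrated with a small timing model. The sketch below is an assumption-laden toy, not the researchers' model: it supposes the projector shows each pattern for a fixed period, that each sensor row starts its exposure a fixed delay after the previous one, and that all times are whole microseconds. The names `pattern_weights` and `row_weights` are hypothetical. It computes what fraction of a row's exposure falls on each projected pattern.

```python
def pattern_weights(start_us, exposure_us, period_us):
    """Fraction of the exposure window spent on each projected pattern.

    Times are integer microseconds. Pattern k is assumed to be shown during
    [k * period_us, (k + 1) * period_us). Returns {pattern_index: fraction}.
    """
    weights = {}
    t = start_us
    end = start_us + exposure_us
    while t < end:
        k = t // period_us                      # pattern on screen at time t
        segment_end = min(end, (k + 1) * period_us)
        weights[k] = weights.get(k, 0.0) + (segment_end - t) / exposure_us
        t = segment_end
    return weights


def row_weights(row, frame_start_us, row_delay_us, exposure_us, period_us):
    """Rolling shutter: each row begins exposing slightly later than the last."""
    return pattern_weights(frame_start_us + row * row_delay_us,
                           exposure_us, period_us)


# Same frame, different rows: the top row sees one pattern, a lower row sees a mix.
print(row_weights(0, 0, 10, 1000, 1000))
print(row_weights(50, 0, 10, 1000, 1000))
```

In this toy setup the top row lines up exactly with one pattern, while row 50 starts half an exposure later and records an even blend of two patterns, which is the kind of mixed image the article describes.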

"That's the main problem we're dealing with," said Daniel Moreno, a graduate student who led the development of the algorithm. "We can't use an image that has a mixture of patterns. So with the algorithm, we can synthesize images -- one for every pattern projected -- as if we had a system in which the pattern and image capture were synchronized."

After the camera captures a burst of images, the algorithm calibrates the timing of the image sequence using the binary information embedded in the projected pattern. Then it goes through the images, pixel by pixel, to assemble a new sequence of images that captures each pattern in its entirety. Once the complete pattern images are assembled, a standard structured-light 3D reconstruction algorithm can be used to create a single 3D image of the object or space.
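The article does not spell out how the pure pattern images are recovered, so the following is only a toy illustration of the general idea, not the method from the paper. It assumes that the timing calibration has already told us how strongly two consecutive patterns are blended in two captures of the same pixel, and that the blend is linear. Under those assumptions, the unmixed values come from solving a 2x2 linear system. The function name `unmix_two` and the example numbers are hypothetical.

```python
def unmix_two(obs_a, w_a, obs_b, w_b):
    """Recover two pure pattern intensities at one pixel from two mixed captures.

    Assumed linear model (an illustration, not the paper's formulation):
        obs_a = w_a * p0 + (1 - w_a) * p1
        obs_b = w_b * p0 + (1 - w_b) * p1
    where w_a and w_b are the known fractions of pattern 0 in each capture.
    """
    det = w_a - w_b  # determinant of the 2x2 mixing matrix
    if abs(det) < 1e-9:
        raise ValueError("captures have the same mixture; cannot separate patterns")
    p0 = (obs_a * (1 - w_b) - obs_b * (1 - w_a)) / det
    p1 = (w_a * obs_b - w_b * obs_a) / det
    return p0, p1


# A pixel that is bright under pattern 0 and dark under pattern 1,
# seen in two captures with different blends of the two patterns.
p0, p1 = unmix_two(obs_a=155.0, w_a=0.75, obs_b=65.0, w_b=0.25)
print(p0, p1)
```

Repeating this for every pixel, with per-row weights to account for the rolling shutter, would yield one clean image per pattern, which is the input a conventional structured-light reconstruction expects.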

In their SIGGRAPH Asia paper, the researchers showed that the technique works just as well as synchronized structured-light systems. During testing, the researchers used a fairly standard structured-light projector. Now that there's an algorithm that can properly assemble the images, the team envisions developing a structured-light flash that could eventually be used as an attachment to any camera.

"We think this could be a significant step in making precise and accurate 3D scanning cheaper and more accessible," Taubin said.

The research was funded by the National Science Foundation.

Source: Brown University

Published January 2016
